(This post originally appeared on the Audiokinetic Blog)

The spatial acoustics of NieR:Automata, and how we used Wwise to support various forms of gameplay - Part 2

Continues from Part 1.

Wwise controls that support a wide variety of gameplay

As I mentioned at the start, the camera position frequently changes, from the standard rear viewpoint to other views such as the top view, side view, and shooting view, so the spatial acoustical effect needs to change and adapt to any of those possibilities.

Interactively changing the listener position

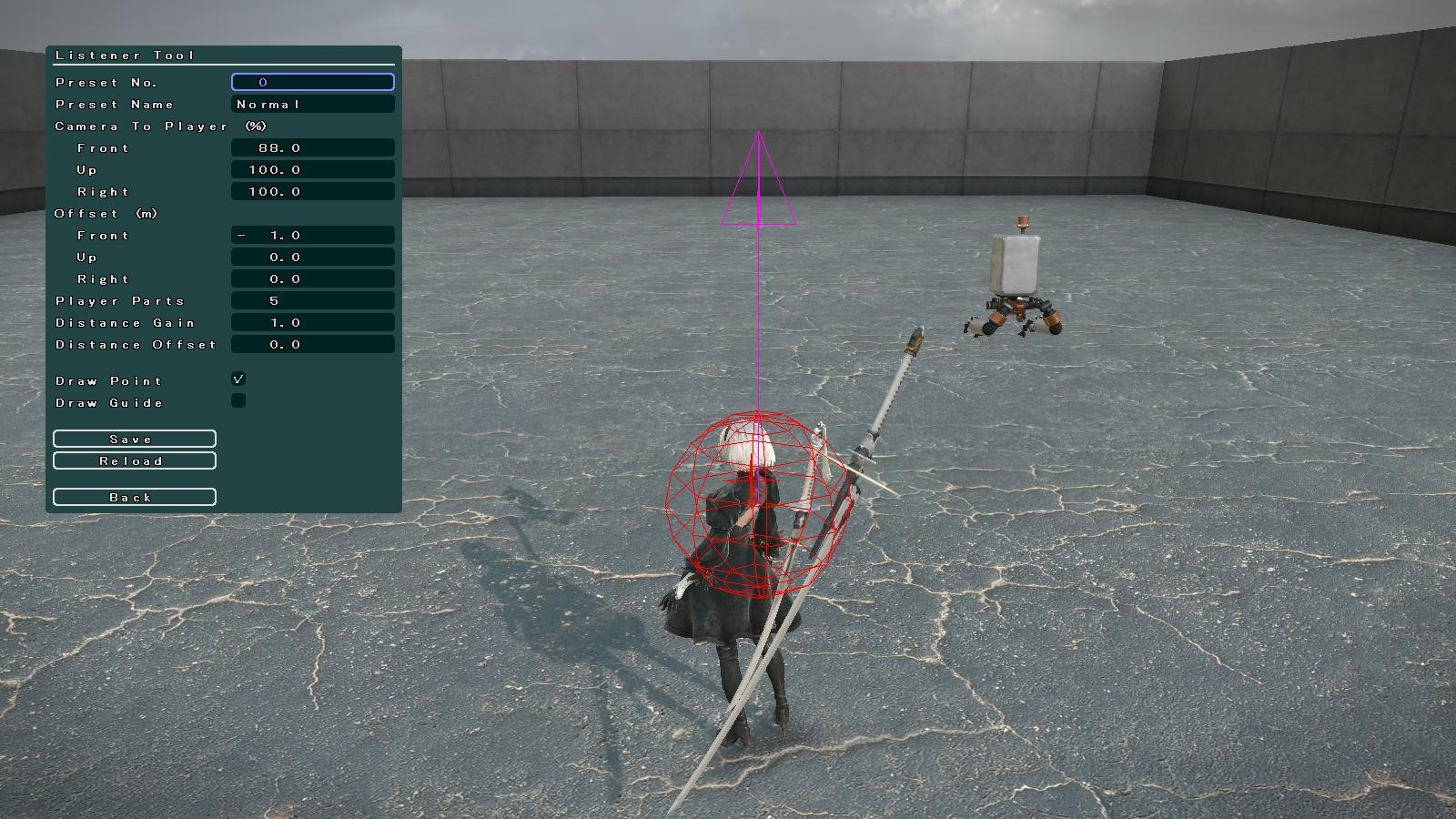

First of all, we needed to figure out where to set the default listener position. As we tried to balance the acoustics of the various camera angles to express the excitement of the in-game action, we concluded that the best default listener position was right by the player.

Because the listener is near the player character, even if the camera angle changes, the basic balance of the mix and the attenuation does not change drastically, so the user can continue to play the game without feeling anything acoustically strange.

The default listener position

Excluding the shooting view, the default listener position was mostly appropriate, including for the top view, back view, and most of the side views, but in some cases, such as cutscenes, special measures had to be taken.

This scene is rather unique, because 2B is in a theatre, watching her enemies, the machine lifeforms, perform a play. Since 2B is not close enough to the stage, the sounds from the play can’t be heard at the intended volume if the listener is kept at its default position. For such scenes, we put in a function that allows the sound designer to switch the listener position as needed.

Distance between the stage and the player (the small red dot in the distance is the listener position)

Moving the listener position from default to center of stage (Preset No. 11)

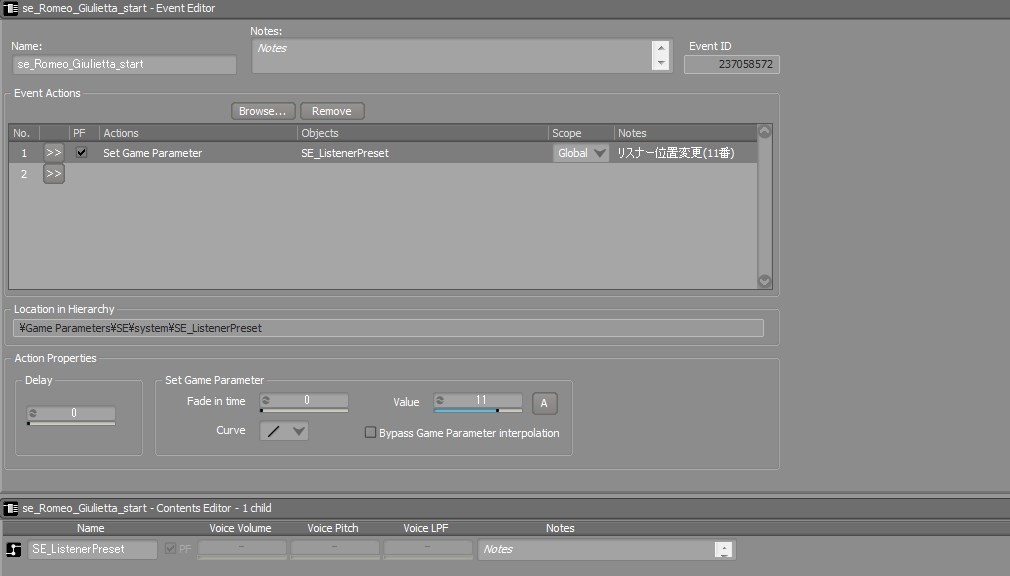

We prepared several listener positions as presets, and the system allows the sound designer to switch between them in a specified area, or with a Wwise Event.

The Event Editor in Wwise for the previous scene (changing the listener preset selection)

When the listener position changes, the parameters are interpolated to ensure that sounds with 3D positions don’t jump abruptly. Seamless audio transitions at these kinds of switching points were especially important to Yoko-san, the director, so we tried to have smooth and natural acoustic transitions throughout the entire game.
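As a rough illustration of this kind of interpolated switch, here is a minimal sketch. It assumes a simple linear blend of the listener position over a fixed duration; the actual interpolation used in the game is not described in this post, and the names `ListenerBlend`, `lerp`, and `duration` are hypothetical.

```python
from dataclasses import dataclass
from typing import Tuple

Vec3 = Tuple[float, float, float]


def lerp(a: Vec3, b: Vec3, t: float) -> Vec3:
    """Linearly interpolate between two positions (t in [0, 1])."""
    return tuple(x + (y - x) * t for x, y in zip(a, b))


@dataclass
class ListenerBlend:
    """Hypothetical helper: moves the listener from one preset to another."""
    start: Vec3
    target: Vec3
    duration: float       # seconds the transition should take (assumed)
    elapsed: float = 0.0

    def update(self, dt: float) -> Vec3:
        """Advance the blend by dt seconds and return the listener position."""
        self.elapsed = min(self.elapsed + dt, self.duration)
        t = 1.0 if self.duration <= 0 else self.elapsed / self.duration
        return lerp(self.start, self.target, t)


# Example: move from the default position to a stage preset over 2 seconds.
blend = ListenerBlend(start=(0, 0, 0), target=(10, 0, 20), duration=2.0)
halfway = blend.update(1.0)
```

The position returned each frame would then be handed to the audio engine as the listener position, so emitters never see the listener teleport.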

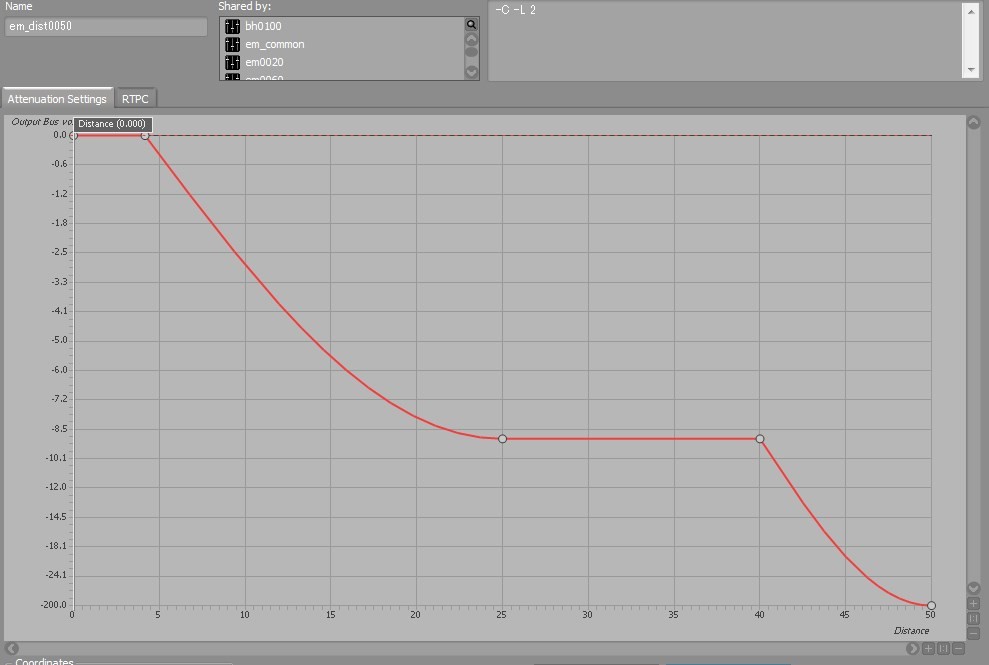

Unique attenuations

In the attenuation settings, there are special attenuation curves which are used for unique camera angles.

In this actual in-game scene, the camera is far from the player and overlooks the entire stage, while the gameplay continues. You can barely see it, but the player is at the small red dot in the middle of this shot. Here, the listener is placed further away from the stage than the default position alongside the player, so the sounds seem slightly further away.

The player character, and the distance from the listener position (the red dot on the bottom right represents the listener position)

The distance between the listener position and the player is always fixed, so the smaller volume gives the feeling that it is a bit far away. However, because the enemy character here is much further away than usual, it will soon be out of attenuation range, and then the sound effects of this enemy character will be barely audible.

In terms of accuracy, this would be the correct response, but the player will no longer be able to notice the enemy character, and this can sometimes be stressful. (Even in the graphic representation, the enemy is small and difficult to see, because it is far away.)

We solved this by setting a special attenuation curve that stops attenuating the sound once it is beyond a certain distance, which resolves any auditory discomfort the user may experience.
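The idea of a curve that stops attenuating past a certain distance can be sketched as follows. This is only an illustration under stated assumptions: the falloff is taken to be linear, and `hold_distance` is a hypothetical parameter name. In the game itself, the curve was authored in the Wwise attenuation editor rather than in code.

```python
def standard_gain(distance: float, max_distance: float) -> float:
    """Assumed linear falloff: 1.0 at the listener, 0.0 at max_distance and beyond."""
    clamped = min(max(distance, 0.0), max_distance)
    return 1.0 - clamped / max_distance


def held_gain(distance: float, max_distance: float, hold_distance: float) -> float:
    """Same falloff, but the gain stops dropping once distance exceeds hold_distance."""
    return standard_gain(min(distance, hold_distance), max_distance)


# A far-away enemy at 150 units with a Max distance of 100:
silent = standard_gain(150.0, 100.0)         # fully attenuated
audible = held_gain(150.0, 100.0, 75.0)      # held at the gain reached at 75 units
```

With the held curve, a distant enemy stays quiet but audible instead of dropping out entirely.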

Controlling sound emissions from multiple sound sources

Finally, let us introduce the sound limiting system that we deployed for this game. In this title, some scenes have so many enemy characters appearing that if all their sounds were played back without restriction, we would run out of CPU.

We adjusted the sound system so that the number of simultaneous sounds would always be kept between 50 and 60, and we had a two-step approach to controlling the sounds being emitted.

1. Before Wwise Events are called, the game program uses its own controls to limit Events.

2. Wwise controls the number of simultaneous sounds after the Events emit sounds.

Step 2 is a standard procedure using the Voice Limit feature and prioritization based on Distance values, but here, we will explain the controlling system of Step 1.

There are three main functions at work: culling, limits on passing sounds, and limits on simultaneous sound playback, all done before calling Wwise Events. By introducing this process, we can avoid overwhelming Wwise with a large number of unnecessary Wwise Event commands, which could result in hangups.

These control functions are written as commands in the memo area of the Wwise Events or attenuation parameters.

Command inserted for an attenuation setting (-C = culling, -L2 = simultaneous sound limiter)
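To give a feel for how such memo commands might be read by the game program, here is a small parser sketch. The exact syntax is not documented in this post, so the sketch assumes whitespace-separated flags, optional arguments that fall back to defaults, and that an argument may be glued to its flag (as in `-L2`). The function name `parse_commands` and the dictionary layout are hypothetical.

```python
import re

# Defaults per command (None for -P means "use the attenuation's Max distance").
DEFAULTS = {
    "C": {"level": 1, "distance": 0},
    "P": {"distance": None},
    "L": {"frames": 1, "target": "g"},
}


def parse_commands(notes: str) -> dict:
    """Extract -C / -P / -L commands from a memo string (assumed syntax)."""
    # Separate a flag from an argument glued to it: "-L2" becomes "-L 2".
    tokens = re.sub(r"-([CPL])(?=\S)", r"-\1 ", notes).split()
    commands = {}
    i = 0
    while i < len(tokens):
        token = tokens[i]
        i += 1
        if token[0] != "-" or token[1:] not in DEFAULTS:
            continue  # ordinary memo text, not a command
        name = token[1:]
        params = dict(DEFAULTS[name])
        for key in params:
            if i >= len(tokens):
                break
            raw = tokens[i]
            if key == "target":
                if raw not in ("g", "o"):
                    break
                params[key] = raw
            else:
                if not raw.isdigit():
                    break
                params[key] = int(raw)
            i += 1
        commands[name] = params
    if "C" in commands and "P" in commands:
        raise ValueError("-C and -P cannot be combined")
    return commands


settings = parse_commands("-C -L2")
```

Keeping the commands in plain text next to the asset is what lets a sound designer change the behavior without touching game code.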

Control by culling

First, a culling process eliminates sounds emitted further than the attenuation’s Max distance. This process is written into many attenuation parameters.

| Command | Parameter | Description |
|---|---|---|
| -C [level] [distance] | level 0-15 (def 1), distance 0-65535 (def 0) | If the level is set to 1 or above, Events that emit sound further away than the Max distance will not be called. Events that emit sound further away than the distance value will be culled so that they are called only once every [level] times. By definition, it cannot coexist with -P. |
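One possible reading of the -C rule is sketched below. It assumes that "once every [level] times" means letting through every level-th call for emitters beyond the distance value; the class name `Culler` and its call counter are hypothetical, not the shipped implementation.

```python
class Culler:
    """Hypothetical pre-filter applied before a Wwise Event is posted."""

    def __init__(self, max_distance: float, level: int = 1, distance: float = 0):
        self.max_distance = max_distance
        self.level = level
        self.distance = distance
        self._count = 0  # calls seen from beyond `distance`

    def should_post(self, emitter_distance: float) -> bool:
        if self.level < 1:
            return True                      # culling disabled
        if emitter_distance > self.max_distance:
            return False                     # inaudible anyway: never post
        if emitter_distance > self.distance:
            self._count += 1                 # thin out: keep 1 call out of `level`
            return (self._count - 1) % self.level == 0
        return True                          # close enough: always post


culler = Culler(max_distance=100, level=2, distance=50)
results = [culler.should_post(60) for _ in range(4)]
```

Under this reading, the default `level` of 1 only removes Events beyond the Max distance, and higher levels progressively thin out mid-range Events.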

Controlling Pass-By sound

Next is the control of sounds that are passing by. In this project, we use it for standby loop sound effects, such as the sounds of an enemy floating around, and other looped sound effects. It is not rare for these characters to be placed further away than the Max distance. In that case, culling is not applied; instead, if the Wwise Event is called while the character is far away, this system delays the actual call for the Event until the character is within the Max distance range.

| Command | Parameter | Description |
|---|---|---|
| -P [distance] | distance 0-65535 (def Max distance) | If an Event is called from a distance, the Event will actually be triggered when it is within the defined distance. By definition, it cannot coexist with -C. |
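The deferral behavior of -P could look roughly like this. It is a sketch only: the class `DeferredEvent`, the per-frame `update` call, and the `post` callback are assumptions made for illustration, and the Event name is made up.

```python
class DeferredEvent:
    """Hypothetical wrapper that holds a looped Event until the emitter is in range."""

    def __init__(self, name: str, trigger_distance: float):
        self.name = name
        self.trigger_distance = trigger_distance
        self.posted = False

    def update(self, emitter_distance: float, post) -> bool:
        """Call once per frame; posts the Event the first time it is in range."""
        if not self.posted and emitter_distance <= self.trigger_distance:
            post(self.name)   # `post` stands in for the real Event call
            self.posted = True
        return self.posted


posted = []
hover = DeferredEvent("Play_Enemy_Hover", trigger_distance=100)
hover.update(250, posted.append)   # too far: nothing is posted yet
hover.update(90, posted.append)    # in range: the Event is posted once
```

Unlike culling, the request is never dropped, which matters for loops that would otherwise stay silent after the character approaches.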

Controlling simultaneous sounds

Finally, among the Wwise Events that are called within the Max distance range, if a specific Event is likely to be called many times at once, the sounds emitted will be limited. If an Event with the same name is called repeatedly within the specified number of frames, the Events that occur later will not be called.

| Command | Parameter | Description |
|---|---|---|
| -L [frames] [target] | frames 0-63 (def 1), target g or o (def g) | If the frames value is set to 1 or above, when Events of the same name are called within the specified number of frames, the later Events will not be called. If the target value is set to o, the limit will be applied only to Events called by the same object (regardless of the parts number). |
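A frame-based limiter along these lines might be sketched as below. It assumes that `g` means the limit is shared by all callers and `o` means it is tracked per calling object, and that "within the specified number of frames" means a frame difference smaller than `frames`. The class `FrameLimiter` and its arguments are hypothetical.

```python
class FrameLimiter:
    """Hypothetical limiter: drops repeated Events posted too close together."""

    def __init__(self):
        self._last_frame = {}  # key -> frame number of the last accepted call

    def should_post(self, event_name, frame, frames=1, target="g", object_id=None):
        if frames < 1:
            return True  # limiter disabled
        # "g": one shared history per Event name; "o": one history per object.
        key = event_name if target == "g" else (event_name, object_id)
        last = self._last_frame.get(key)
        if last is not None and frame - last < frames:
            return False  # a same-named Event was accepted too recently
        self._last_frame[key] = frame
        return True


limiter = FrameLimiter()
first = limiter.should_post("Play_Shot", frame=10, frames=2)
second = limiter.should_post("Play_Shot", frame=11, frames=2)   # dropped
third = limiter.should_post("Play_Shot", frame=12, frames=2)    # accepted again
```

Because the check runs before the Event is posted, a crowd of enemies firing on the same frame produces a single call rather than dozens.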

All these controls can be set up and adjusted by the sound designer without any help from a programmer, which was the most important point for us.

Custom-made Wwise plug-ins that allow the audio to be more interactive

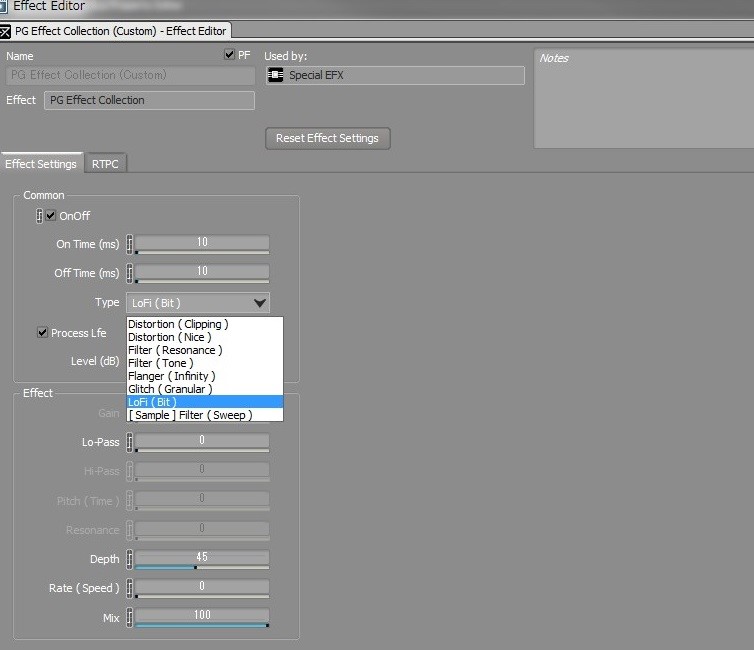

LoFi Plug-in

Lo-fi filters are relatively common, but creating a lo-fi sound that does not feel discomforting is difficult, so we think that being able to utilize such effects freely is one of the important roles of real-time audio.

In NieR:Automata, lo-fi effects were used in many scenes, whenever the player encountered problems with the transmitter voice or their own senses. The effects themselves are made to be very light, and they were inserted into many Audio Buses and the Actor Mixer. The lo-fi effect is also part of the Multi effector plug-in, which combines many different kinds of effects, such as distortion, filtering, and flanger, as shown below. It is a system that showcases the expertise of the co-author, Shuji Kohata, who has experience in making hardware guitar effects, and the sound can change quite effectively through simple operations, which is appreciated by our sound designers.

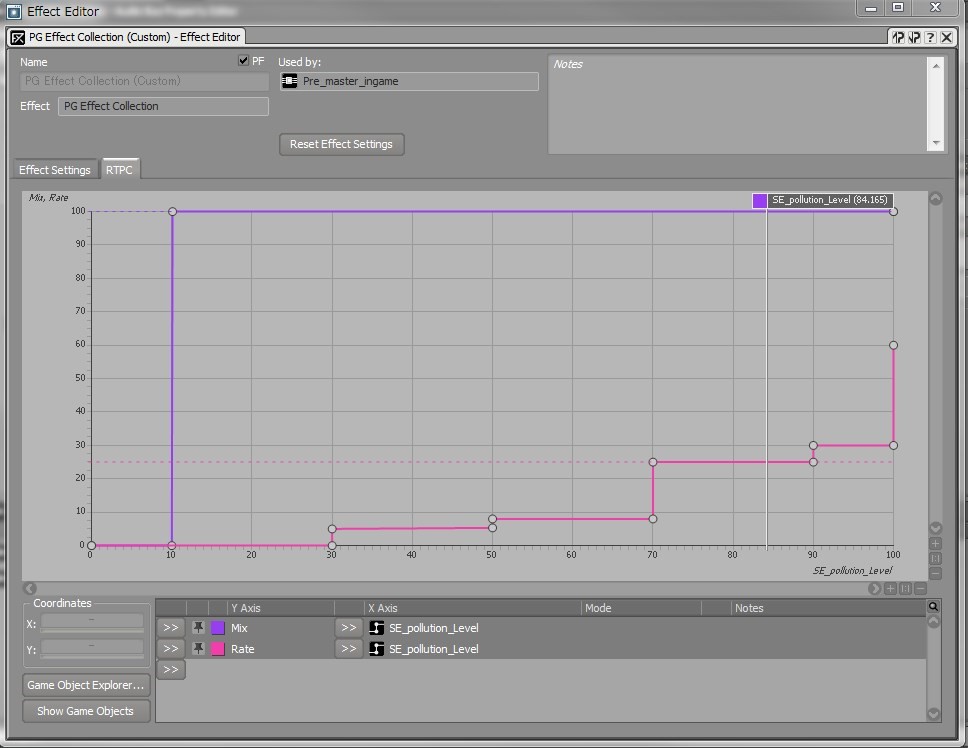

Lo-fi parameters for when the player character 2B is infected by a virus (the contamination level is 0 at the left end and rises toward the right, with the lo-fi effect becoming stronger)

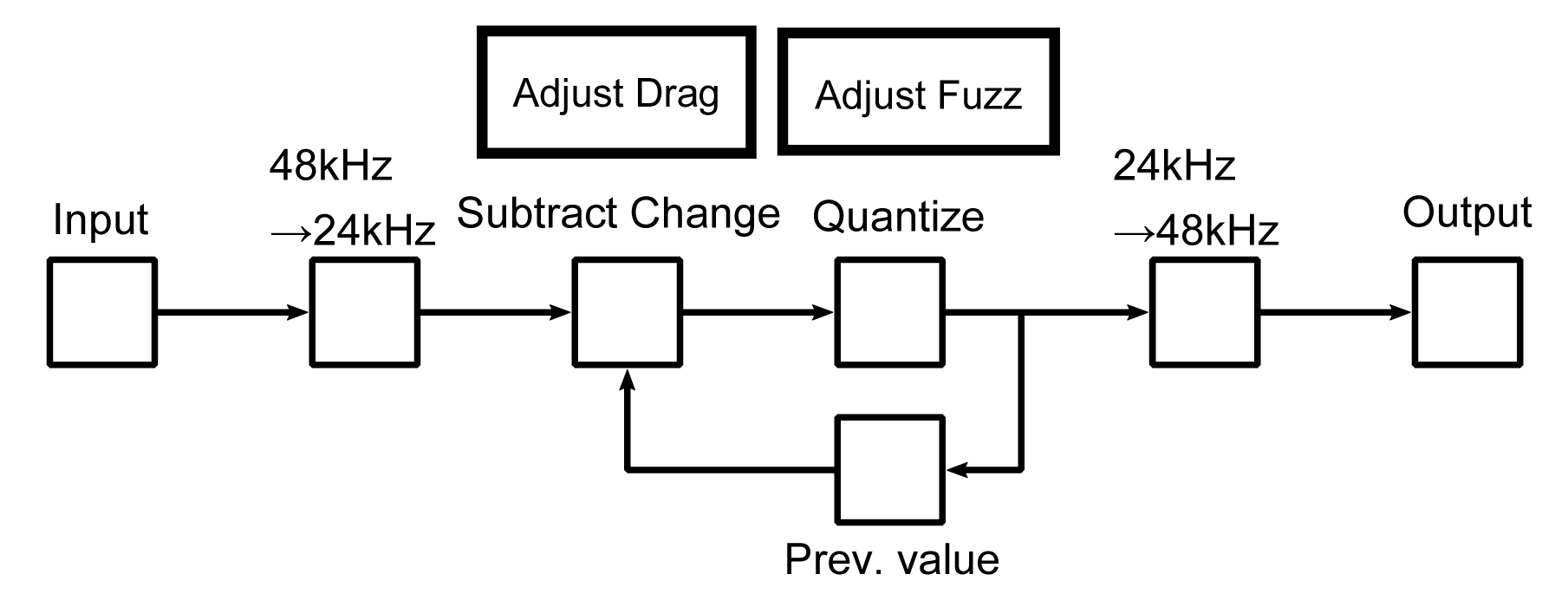

This is the DSP diagram for the lo-fi feature. It shows the monaural case; when there are multiple channels, the same process is repeated for each channel.

Lo-fi DSP diagram

Lo-fi processing

- The sampling rate is temporarily halved, to 24 kHz. (This is to reduce the processing load, and also because lo-fi does not need high sampling rates.)

- Make the value closer to the previous value, then quantize. (The reason is to prevent excessive noise and roughness in the sound after quantizing.) Note that the closer the value is pulled to the previous value, the more clipping increases, and if the quantization is too rough, the noise and roughness of the sound will increase. Overall, it is a simple system, but this process of making the value closer to the previous value is important.

- Turn back the sampling rate and output the result.
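The three steps above can be sketched for a single channel as follows. This is not the plug-in's code: it assumes decimation by simply dropping every second sample, takes "the previous value" to be the previous output sample, uses a uniform quantizer over [-1, 1], and restores the rate by repeating samples. The names `lofi`, `smoothing`, and `levels` are hypothetical.

```python
def lofi(samples, smoothing=0.5, levels=16):
    """Mono lo-fi sketch: halve the rate, pull toward previous value, quantize, restore."""
    # 1. Halve the sampling rate by keeping every second sample (assumed method).
    half_rate = samples[::2]

    # 2. Pull each sample toward the previous output, then quantize it.
    step = 2.0 / levels          # quantization step for a [-1, 1] signal
    prev = 0.0
    processed = []
    for x in half_rate:
        pulled = x + (prev - x) * smoothing   # smoothing=0 leaves x unchanged
        prev = round(pulled / step) * step    # snap to the nearest level
        processed.append(prev)

    # 3. Return to the original rate by repeating each sample.
    out = []
    for y in processed:
        out.extend((y, y))
    return out[:len(samples)]


crushed = lofi([0.3, 0.9, -0.6, 0.0], smoothing=0.0, levels=4)
```

Raising `smoothing` dulls the signal before it is quantized, which is the step the post singles out as the one that keeps the result from sounding harsh.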

Even a standard process like this can make the game more immersive if you add an additional step or two.

Voice Changer Plug-in

While separate from interactive audio, the Voice Changer is also an important Wwise plug-in used to express the game’s world. When the player meets certain in-game conditions, they can unlock the optional settings and apply effects to the voice of the player character.

The player characters are androids, and we had the idea that they should be able to set their own voices freely, so we introduced pitch shift and other variations such as a robot-sounding voice.

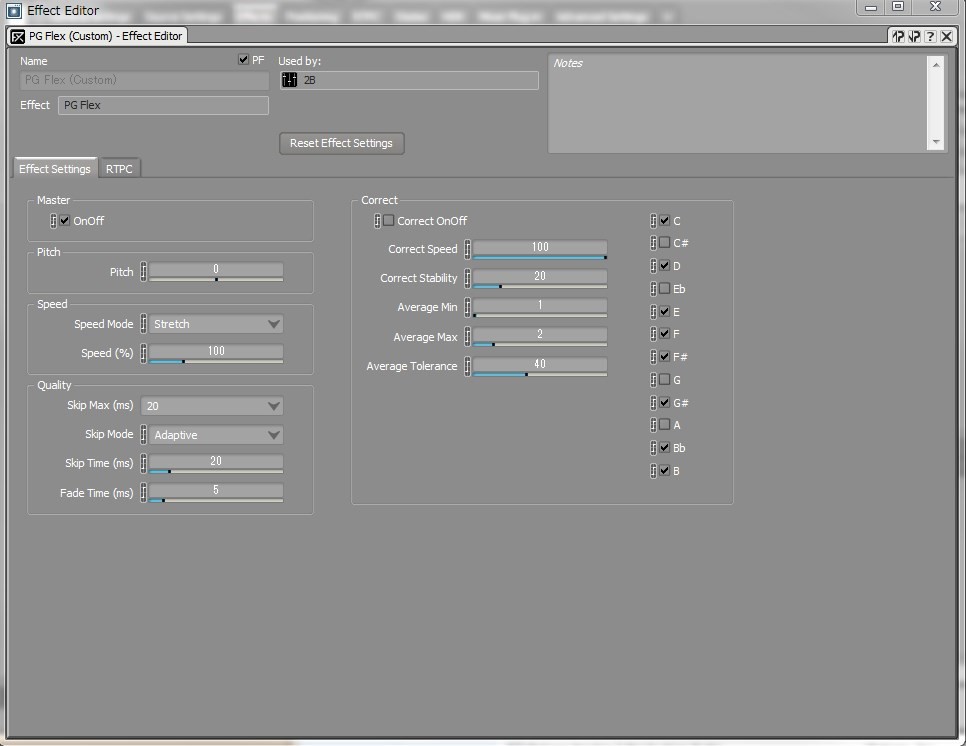

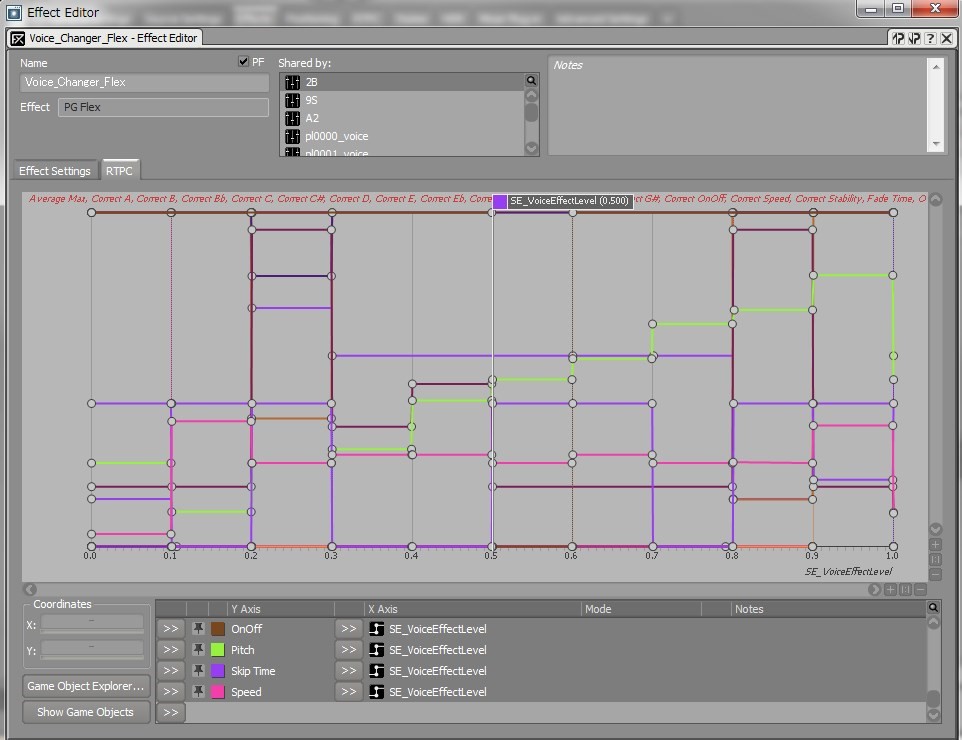

This is an effector that combines multiple functions, such as the pitch shifter, time stretch, glitch effect, and pitch correct.

In the actual game, we prepared 11 types of presets, and the parameter values were specified so that the change would be easy for the user to notice, and the effects would not overlap. Trying to decide on the presets was both fun and difficult. We have much respect for the people who come up with commercial effector and synthesizer presets…

Voice changer parameters used in this project

Machine lifeforms’ voice effects

While this does not directly involve Wwise, we are often asked about how we created the voice effects for the machine lifeforms, so let us write a little about that.

The machine lifeforms appear as enemy characters, and each one has a voice effect applied to make them sound robotic. The crucial point in a robotic voice is aligning the pitch, and there are many kinds of commercial pitch correction effectors offering a wide range of options, so we tried several. We ended up using Nuendo’s Pitchcorrect, but the others also had good quality and enabled the pitch to be corrected neatly.

The reason we chose Nuendo is that depending on the original sound’s pitch variation, we got a slightly unique sound with some flickering characteristics which the others didn’t offer, and also because its Formant function had a digital feel not seen in the others, and the result was a slightly human touch to the digital effect. The flickering of the pitch also sounded like the machine lifeforms were trying, in vain, to copy the speech intonation of humans.

Voice processing plug-in for the machine lifeforms

Personally, I found this important. In contrast to the ever-cool android 2B, who didn’t show any emotion at all, the enemy machine lifeforms are robots, but we wanted them to sound warm and emotional.

Actually, we recorded two versions of the machine lifeform voices, one with as constant a pitch as possible, and the other without pitch alignment and with normal intonations of speech, and in the end, we chose the latter.

After we decided on the pitch of the machine lifeforms, we used Delay, Flanger, and Speakerphone to make the voice sound like it was coming from an internal speaker.

Epilogue

Looking back at the development process, we can say that we succeeded in controlling many aspects of audio with Wwise. Being able to control all this with a single tool is very convenient for managing parameters and debugging the game, and it makes it easier to apply our experience to other projects.

We aimed to improve the quality of the game audio by focusing on acoustic spatial expressions, and the methods and plug-ins we used all have room for further development.

For example, Simple3D is a plug-in for creating 3D sounds, but we were limited to only using the position of sounds for the 3D effect. Now, I would like to try expressing 3D sounds while factoring in conditions such as the distance between the listener and the sound source. The same goes for K-verb, which could have even better acoustic properties, and by combining it with occlusion information, the system could be more encompassing.

Another challenge that we are currently undertaking is how to automatically allocate sound to motion, a process which is becoming increasingly common thanks to innovations in hardware, though this is not related to acoustic spatial expression. Our goal for the near future is not just eliminating simple manual labor, but developing a tool to uncover new ideas and methods.

There are many tools for game production, and there are many challenges. Our sound designers, composers, and programmers discuss such issues daily, and I think it must be the same in any game company. That is why we decided to write this blog post, so that game creators who are using Wwise around the world might find something interesting and helpful. It would be great if you could gain even the smallest of ideas or clues from our post.

Misaki Shindo

After playing in an indie band and working at a musical instrument shop, Misaki joined PlatinumGames in 2008 as a sound designer. In the latest game, NieR:Automata, she was the lead sound designer in charge of sound effect creation, Wwise implementation, and building the sound effect system.

Shuji Kohata

Shuji worked in an electronic instrument development firm before joining PlatinumGames in 2013. As the audio programmer, he oversees the technical aspects of acoustic effects. In NieR:Automata, he was in charge of system maintenance and audio effect implementation. Shuji’s objective is to research ways to express new sounds and utilize those results in future games, and he is continuously working to refine game audio technologies.